An Ode to Red Teaming

“For a successful technology, reality must take precedence over public relations, for Nature cannot be fooled.”

Richard Feynman wrote that in his appendix to the Rogers Commission report on the Challenger disaster, which was an institutional failure before it was anything else. Engineers at Morton Thiokol had identified the O-ring problem months earlier, quantified the risk, and on the night before the launch recommended explicitly against flying in cold weather. NASA inverted the burden of proof - instead of demonstrating it was safe to fly, the engineers were asked to demonstrate it was unsafe - and when they couldn’t meet that impossible standard with the data they had, management overruled them. Seven people died the next morning because the institution’s own incentive structure, its schedule pressure and political commitments and the raw human discomfort of being the person who stops the show, made it unable to act on a correct analysis delivered through proper channels by the people who knew the most. This is the failure mode that matters most, and it is the common case - Pearl Harbor, the Yom Kippur War, the 2008 financial crisis, the pre-9/11 intelligence picture.

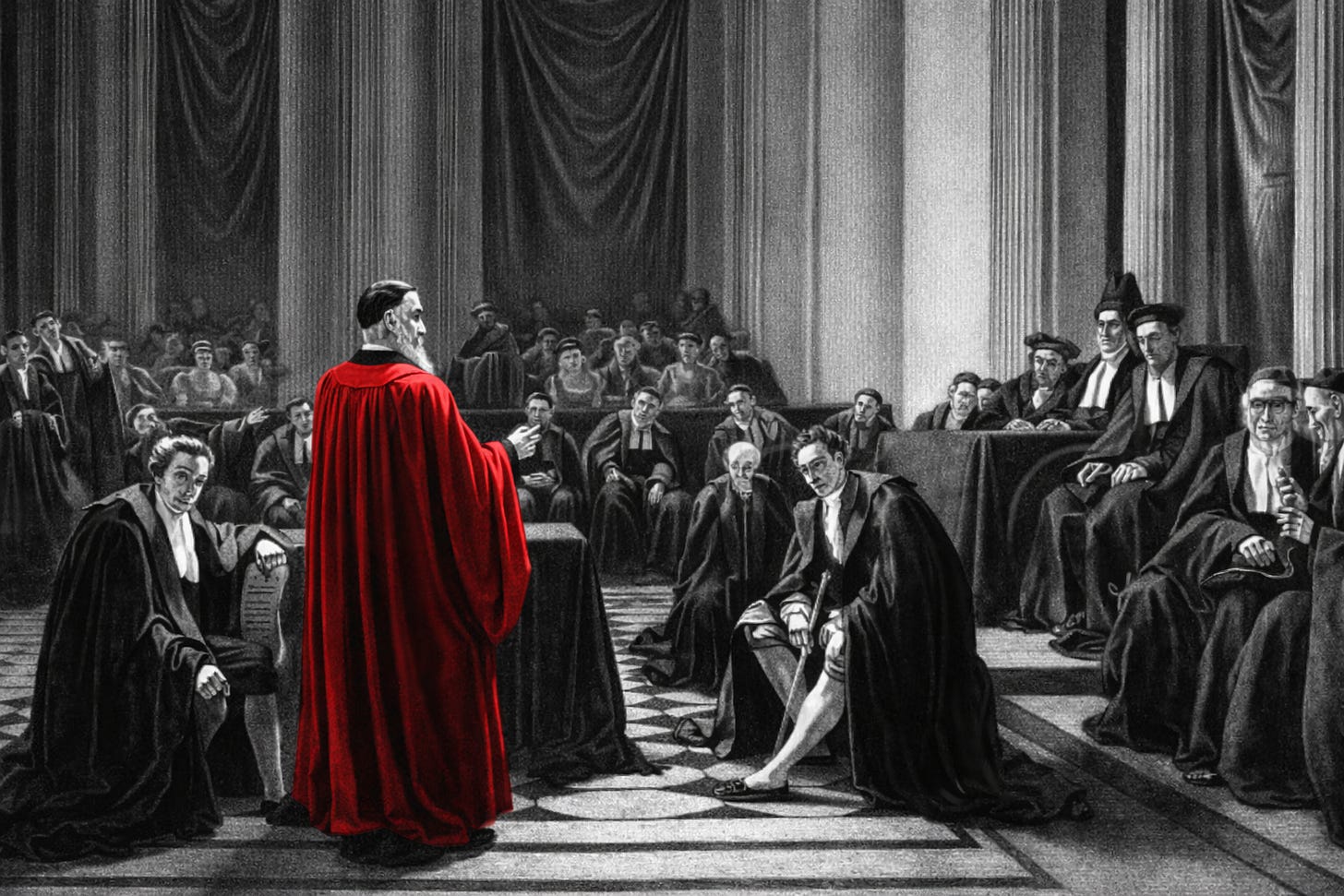

The practice of red teaming - structured adversarial analysis of your own plans and systems - exists to prevent exactly this, and institutions have been reaching for it for a very long time. The Catholic Church paid a full-time Devil’s Advocate for four centuries, and after the Yom Kippur intelligence failure nearly destroyed Israel, its military created an office staffed by analysts who could not be penalized for arguing against the prevailing assessment. The principle is always the same - make disagreement structural, obligatory, and protected. Bravery doesn’t scale.

I think this practice is about to become the most important capability any organization possesses. The coming shift is a move from static analysis to adversarial systems, and the organizations that embrace it - that are genuinely willing to hear what those systems find - will build things that are fundamentally better than what exists today.

An Adversary That Can Think

On February 5th, 2026, Anthropic disclosed that their newest AI model, given a sandboxed virtual machine with standard debugging tools but no specialized instructions, discovered more than 500 previously unknown high-severity vulnerabilities in some of the most heavily tested open-source software on earth - by reading the code and reasoning about it.

What matters here is how the model found them.

The codebases in question - GhostScript, OpenSC, CGIF - had automated fuzzers running against them for years, accumulating millions of hours of CPU time, and the bugs had still survived. Fuzzers execute the code, so they are technically dynamic tools, but they are static analysis in spirit: they throw random or mutated inputs at software and check for known categories of failure, which makes them important but fundamentally unintelligent, a battering ram that can only find what brute force can reach. The model did something categorically different. It studied Git commit histories, read past security fixes, reasoned about whether similar errors might exist elsewhere in the codebase, and for one vulnerability involving LZW compression it wrote its own proof-of-concept exploit - which required understanding the compression algorithm and constructing a specific sequence of operations that random input generation would be vanishingly unlikely to produce.
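To make the battering-ram point concrete, here is a minimal sketch in Python. Everything in it is hypothetical: `parse`, `MAGIC`, `random_fuzz`, and `reasoned_input` are invented for illustration and have nothing to do with the real GhostScript, OpenSC, or CGIF code or with the vulnerabilities Anthropic reported. The toy parser hides a flaw behind a four-byte header, and the fuzzer is purely random. Real fuzzers are coverage-guided and would get past a simple header check, so treat the header as a stand-in for the deeper, stateful preconditions they struggle to reach.

```python
import random

MAGIC = b"LZW1"  # hypothetical 4-byte header the toy parser insists on


def parse(data: bytes) -> str:
    """Toy parser: the flaw is reachable only through a well-formed input."""
    if len(data) < 6 or data[:4] != MAGIC:
        return "rejected"  # almost every random input stops here
    table_size, code = data[4], data[5]
    if code > table_size:
        # The designers assumed codes always stay inside the table.
        # This branch stands in for a memory-safety bug.
        return "out-of-bounds"
    return "ok"


def random_fuzz(trials: int, seed: int = 0) -> int:
    """Throw uniformly random 6-byte inputs at parse() and count bug hits."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        data = bytes(rng.randrange(256) for _ in range(6))
        if parse(data) == "out-of-bounds":
            hits += 1
    return hits


def reasoned_input() -> bytes:
    """Built by reading parse(): a valid header, then a code past the table."""
    return MAGIC + bytes([1, 2])


if __name__ == "__main__":
    print("random fuzz hits:", random_fuzz(20_000))
    print("reasoned input:", parse(reasoned_input()))
```

Each random trial has about a one in four billion chance of even passing the header check, so twenty thousand trials will almost certainly report zero hits, while the input constructed by reading the code reaches the flaw on the first try. The gap in the real case was of the same kind, only much larger.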

This is the distinction that matters. Static analysis - checklists, compliance audits, rule-based scanning, fuzz testing - asks whether a system has any of the failure modes we already know about, and that’s valuable; it should be table stakes for any serious organization. But the failures that actually kill organizations are almost never the ones they knew to check for. They are the flaws hiding in the gap between what a system was designed to do and what it actually does under conditions nobody anticipated, the assumptions that felt like facts until they weren’t. Fuzzers ran against those codebases for millions of CPU hours and missed what reasoning found in days.

This is adversarial analysis in its purest form, and the core operation is the same in every domain: learn the physics of a system - its rules, its assumptions, the constraints its designers believed would hold - then look at every actor and component within that system and reason about how those rules can be violated, circumvented, or revealed to have never been true in the first place. The system happened to be C code, but the same operation applies anywhere you find complexity sitting on top of unexamined assumptions - a financial model, a supply chain, a clinical protocol, an organizational restructuring. Every complex system has the equivalent of buffer overflows, assumptions baked into the design that hold true until they don’t, and while static methods will catch some of them, an adversary that can think will catch the ones that matter.

Logan Graham, head of Anthropic’s Frontier Red Team, put it plainly: “The models are extremely good at this, and we expect them to get much better still.”

Claude’s depth in code makes it a natural adversary for software systems; Grok, trained alongside SpaceX and Tesla data, will prove exceptionally good at interrogating physical ones. But the specialization is probably temporary - as these systems learn to internalize the actual physics of any domain they’re pointed at, the rules and constraints and the ways things break, the distinction between software red team and hardware red team starts to disappear into a generalized systems red team.

A year ago, AI in security contexts was compared to a talented undergraduate; today it is finding bugs that survived decades of expert analysis, and that leap took twelve months. The pace is not decelerating. The scarcity that has always constrained red teaming - the rarity of people who can genuinely think like adversaries - is dissolving, and with it every excuse organizations have used to defer this work.

And here is the part that makes this a matter of necessity as much as opportunity: these tools are becoming accessible to everyone. The same capabilities that let a Fortune 500 company run continuous adversarial analysis against its own infrastructure will be available to a ten-person startup, an independent security researcher, a competitor, and a regulator. This is, on balance, a very good thing - it means that resilience is no longer a luxury reserved for organizations with massive security budgets. But it also means your systems will be subjected to adversarial analysis whether you initiate it or not, by people with various motivations, and the organizations that get ahead of this will be the ones that chose to find their own flaws first.

The Honesty Problem

But technology is only half of the problem, and it’s the easier half.

Adversarial analysis, by its nature, produces structural findings, and structural findings are the ones institutions are worst at hearing. A static analysis tool tells you that a specific input isn’t validated - a bounded problem with a bounded fix. An adversarial system tells you that your trust model is wrong, that your architecture assumes a condition that doesn’t hold in practice, that two subsystems interact in a way nobody considered when they were designed independently. These are design problems, and they implicate decisions made months or years ago by people who are still in the room, still in charge, still invested in the framework they built.

This is why adversarial findings are so much more valuable than static ones and so much harder to act on. Nobody’s identity is threatened by an unvalidated input field. A finding that says your core architecture rests on a flawed assumption - that’s expensive to fix, embarrassing to acknowledge, and threatening to the people whose judgment it questions. The institutional instinct is to reclassify these findings: theoretical, edge case, something to address in the next version. This is exactly what happened at NASA the night before Challenger - the structural concern that O-rings lose resilience in cold weather was reclassified as an acceptable risk by people who had the authority to do so and every incentive to keep the launch on schedule.

The more powerful adversarial tools become, the more structural findings they will produce. An AI that can reason about how systems fail will surface architectural flaws, broken assumptions, and design decisions that seemed right at the time and aren’t - including the ones that are hardest and most expensive to fix. Organizations that deploy these tools and then quietly file away the uncomfortable results will have spent money to learn they were wrong and then chosen to stay wrong, which is worse than ignorance, because now the knowledge exists on a record somewhere, waiting for the post-mortem.

So the organizational requirements follow directly. The red team must be independent - it cannot report to the people who built the system. Findings must be binding: remediate, accept the risk on the record, or explain specifically why the finding is invalid. Adversarial thinking must be present from the beginning, at the design stage, when a structural flaw costs you a conversation rather than a quarter. And with AI-driven analysis, testing must be continuous - systems change daily, and a quarterly red team exercise belongs to another era.

None of this is new in principle. The ancient Sanhedrin, the high court of Talmudic Jewish law, required that the court find arguments in the defendant’s favor - a procedural obligation, not an optional courtesy - and if all 23 judges voted to convict, the verdict was thrown out, because it proved no one had fulfilled that duty. Before a judge could even serve, he had to demonstrate he could argue persuasively for something the Torah explicitly forbade. The insight that dissent must be built into the structure - that you cannot rely on individuals to be brave - is two thousand years old.

What’s new is the technology to act on it at scale. The human role shifts from finding vulnerabilities to deciding what they mean - prioritization, context, risk tolerance, ethical weight. Discovery automates. Judgment stays human.
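The requirement that findings be binding is the easiest of these to picture as a mechanism. The sketch below is one hypothetical way to encode it, not a description of any existing tool: the names `Finding`, `Disposition`, `resolve`, and `unresolved` are all assumptions made for illustration. The point is only that a finding cannot be closed without a named owner, and cannot be waved away without a written reason.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Disposition(Enum):
    """The three permitted outcomes for a red-team finding."""
    REMEDIATE = "remediate"
    ACCEPT_RISK = "accept_risk"
    INVALID = "invalid"


@dataclass
class Finding:
    title: str
    disposition: Optional[Disposition] = None
    owner: str = ""
    rationale: str = ""

    def resolve(self, disposition: Disposition, owner: str, rationale: str = "") -> None:
        """Record a decision; quietly filing the finding away is not an option."""
        if not owner.strip():
            raise ValueError("a named owner must sign off on the record")
        if disposition is not Disposition.REMEDIATE and not rationale.strip():
            raise ValueError(
                "accepting the risk or rejecting the finding needs a specific written rationale"
            )
        self.disposition = disposition
        self.owner = owner
        self.rationale = rationale


def unresolved(findings: List[Finding]) -> List[Finding]:
    """Findings nobody has decided on yet; a release gate could block on these."""
    return [f for f in findings if f.disposition is None]


if __name__ == "__main__":
    findings = [
        Finding("Trust model assumes the internal network is private"),
        Finding("Two subsystems share a cache nobody owns"),
    ]
    findings[0].resolve(Disposition.REMEDIATE, owner="platform lead")
    print([f.title for f in unresolved(findings)])
```

The design choice that matters is in `resolve`: accepting a risk is allowed, but only with a name and a reason attached, which is exactly the record that was missing the night before Challenger.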

On Better Systems

The question worth asking is what becomes possible when organizations take this seriously.

If adversarial analysis runs at AI speed, the feedback cycle between “design something” and “discover how it fails” compresses from months to effectively zero. That fundamentally changes what you can attempt in the first place. You can afford to be more architecturally ambitious because you can test more aggressively. You can explore design spaces that were previously too risky because the cost of finding out you were wrong was too high and took too long. The organizations that figure this out will move faster, because the thing that used to slow them down - waiting for the red team - becomes the thing that accelerates them.

The goal is to build systems that are structurally sound from the beginning, so that the people building on them can move forward with confidence rather than perpetually firefighting critical flaws. The structural problems - broken assumptions, wrong trust boundaries - surface early and hit hardest, and once they’re addressed the severity of what remains drops off. Every major flaw found at the foundation level is one less thing silently undermining everything built on top of it.

This is where legacy organizations will feel the most pain. Those running mature systems that were never subjected to serious adversarial analysis are about to discover, very quickly, just how many structural flaws have been accumulating under the surface. Remediating them after the fact, while the system is load-bearing, is orders of magnitude harder than finding them at the design stage. New entrants that build with continuous adversarial analysis from day one will ship on a foundation that some legacy organizations may never fully reach - and that structural advantage compounds with every iteration, because resilience begets trust, trust begets adoption, and the gap becomes visible very quickly to anyone who depends on these systems.

There is also an urgency here, because the asymmetry that has historically favored offense - attackers need to find one flaw; defenders need to find all of them - is about to be compounded by AI that searches at machine speed, and those tools will be widely and cheaply available to everyone. But urgency is a reason to start now, and the real reward for starting is what you get to build.

Imagine a system - a rocket ship, a piece of software, a financial product, an organizational process - that has been subjected to continuous adversarial analysis from the day it was conceived, with every architectural assumption tested, every trust boundary probed, every interaction between subsystems examined by an intelligence that never gets tired and never gets political. Flaws caught at the whiteboard stage, when fixing them costs a conversation. Structural weaknesses identified before they become load-bearing. The design evolving toward what works and away from what fails, informed by an adversary that has explored the failure space more thoroughly than any human team ever could.

That system would be genuinely resilient - resilient in the way that only comes from having been honestly stress-tested at every level. Organizations that build this way will produce things unlike what exists today: more trustworthy, more robust, more capable of handling conditions their designers didn’t anticipate, because something already asked “what happens if this assumption is wrong?” and the answer got incorporated rather than filed away.

We have the tools. The question - the same question institutions have been asking for centuries, and mostly getting wrong - is whether we’re willing to act on what they find.

The ones who are will build something worth trusting.